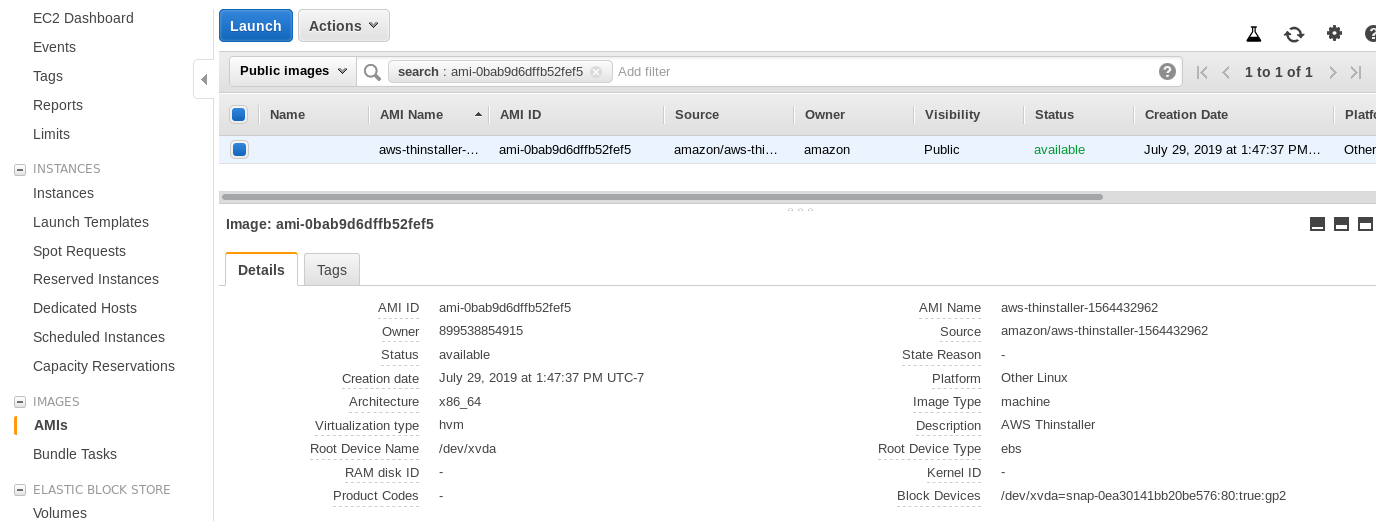

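One way of keeping the number of stored backups down is backup rotation. While there are plenty of different backup rotation schemes, a 'first in, first out' scheme, where old backups are removed as new ones are added, is effective and easy to script for S3 storage.

Create a private AWS S3 bucket to store your database backups: AWS 'create bucket' guide.

Create IAM User

Create an IAM user in your AWS account with access to the S3 bucket created above: AWS 'create user' guide.

The script requires list, put, and delete access on the S3 bucket, so the S3 policy attached to the IAM user should grant those permissions (`s3:ListBucket`, `s3:PutObject`, and `s3:DeleteObject`).

```sh
#!/bin/sh

# PSQL Database Backup to AWS S3

echo "Starting PSQL Database Backup."

# Ensure all required environment variables are present.
# The tested names were lost from the source. This list is reconstructed from
# the variables the script uses; the three AWS_* names are the AWS CLI's
# standard credential variables and are an assumption.
if [ -z "$GPG_KEY" ] || \
   [ -z "$GPG_KEY_ID" ] || \
   [ -z "$POSTGRES_PASSWORD" ] || \
   [ -z "$POSTGRES_USER" ] || \
   [ -z "$POSTGRES_HOST" ] || \
   [ -z "$POSTGRES_DB" ] || \
   [ -z "$AWS_ACCESS_KEY_ID" ] || \
   [ -z "$AWS_SECRET_ACCESS_KEY" ] || \
   [ -z "$AWS_DEFAULT_REGION" ] || \
   [ -z "$S3_BUCKET" ]; then
    >&2 echo 'Required variable unset, database backup failed'
    exit 1
fi

# Make sure required binaries are in path (YMMV)
export PATH=/usr/local/bin:$PATH

# Import gpg public key from env
echo "$GPG_KEY" | gpg --batch --import

# Create backup params
backup_dir="$(mktemp -d)"
# The file extension after the final '.' is truncated in the source
backup_name="$POSTGRES_DB-$(date +%d-%m-%Y-%H-%M-%S)."
backup_path="$backup_dir/$backup_name"

# Create, compress, and encrypt the backup
PGPASSWORD="$POSTGRES_PASSWORD" pg_dump -d "$POSTGRES_DB" -U "$POSTGRES_USER" -h "$POSTGRES_HOST" \
    | bzip2 \
    | gpg --batch --recipient "$GPG_KEY_ID" --trust-model always --encrypt --output "$backup_path"

# Check backup created (condition and body reconstructed; lost from the source)
if [ ! -e "$backup_path" ]; then
    >&2 echo 'Backup file not found'
    exit 1
fi

# Push backup to S3
aws s3 cp "$backup_path" "s3://$S3_BUCKET"
status=$?

# Remove tmp backup path
rm -rf "$backup_dir"

# Indicate if backup was successful
if [ "$status" -eq 0 ]; then
    echo "PSQL database backup: '$backup_name' completed to '$S3_BUCKET'"

    # Remove expired backups from S3.
    # Assumption: most of this step was lost from the source. ROTATION_PERIOD
    # (retention in days) is a hypothetical variable name, and `date -d`
    # requires GNU or BusyBox date.
    if [ -n "$ROTATION_PERIOD" ]; then
        aws s3 ls "s3://$S3_BUCKET" --recursive | while read -r line; do
            stringdate=$(echo "$line" | awk '{print $1" "$2}')
            filedate=$(date -d "$stringdate" +%s)
            olderthan=$(date -d "-$ROTATION_PERIOD days" +%s)
            if [ "$filedate" -lt "$olderthan" ]; then
                filetoremove=$(echo "$line" | awk '{$1=$2=$3=""; print $0}' | sed 's/^[[:space:]]*//')
                if [ -n "$filetoremove" ]; then
                    aws s3 rm "s3://$S3_BUCKET/$filetoremove"
                fi
            fi
        done
    fi
else
    echo "PSQL database backup: '$backup_name' failed"
    exit 1
fi
```

Depending on your deployment setup, there are different ways to deploy the script so that the backup job runs regularly. Here are the setup methods for two typical deployment types:

On a virtual machine (VM), copy the script into a suitable directory and make sure it is executable for the user who will be running it.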
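For the virtual machine case, making the script executable and scheduling it with Linux cron could look like the sketch below. The paths, the environment file, and the daily 02:00 schedule are hypothetical placeholders rather than values from this article, and `cron_entry` and `install_cron_entry` are illustrative helper names.

```shell
#!/bin/sh
# Sketch: schedule the backup script on a VM with cron.
# All paths and the schedule below are hypothetical placeholders.

# Make the script executable for its owner (run once, as that user):
#   chmod u+x /opt/backups/psql_backup.sh

# Print a crontab line that exports the variables from an environment file,
# runs the script, and appends stdout and stderr to a log file.
cron_entry() {
    schedule=$1
    env_file=$2
    script=$3
    log=$4
    printf '%s set -a && . %s && set +a && %s >> %s 2>&1\n' \
        "$schedule" "$env_file" "$script" "$log"
}

# Append an entry to the current user's crontab (defined here, not invoked).
install_cron_entry() {
    entry=$1
    { crontab -l 2>/dev/null; printf '%s\n' "$entry"; } | crontab -
}

# Example: print an entry that runs the backup daily at 02:00.
cron_entry '0 2 * * *' /etc/psql-backup.env /opt/backups/psql_backup.sh /var/log/psql_backup.log
```

Keeping the variables in a separate file that only the backup user can read avoids putting credentials in the crontab itself. To install the printed line, pass it to `install_cron_entry`.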

Optimise resource requirements by keeping the number of stored backups at a cost-effective level.
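A 'first in, first out' rotation comes down to deciding which stored objects are older than a cut-off. The sketch below isolates that decision in a hypothetical helper, `expired_keys`, which reads lines in the format printed by `aws s3 ls --recursive` (date, time, size, key) and prints the keys older than a given epoch timestamp. It assumes a `date` that accepts `-d` (GNU coreutils or BusyBox). The sample listing and the 30-day period in the comment are made up for illustration.

```shell
#!/bin/sh
# Sketch: pick out backups older than a cut-off from an `aws s3 ls` listing.

# Read "DATE TIME SIZE KEY" lines on stdin and print every KEY whose
# timestamp is earlier than the epoch seconds given as $1.
expired_keys() {
    cutoff=$1
    while read -r day time size key; do
        # Skip blank or malformed lines.
        [ -n "$key" ] || continue
        stamp=$(date -d "$day $time" +%s 2>/dev/null) || continue
        if [ "$stamp" -lt "$cutoff" ]; then
            printf '%s\n' "$key"
        fi
    done
}

# Example with a made-up listing: only the January object is older than
# the 1 February cut-off, so only its key is printed.
cutoff=$(date -d '2023-02-01 00:00:00' +%s)
expired_keys "$cutoff" <<'EOF'
2023-01-01 10:00:00       1024 mydb-01-01-2023.bak
2023-03-01 10:00:00       2048 mydb-01-03-2023.bak
EOF

# Against a real bucket (not run here), with a 30-day retention period:
#   aws s3 ls "s3://$S3_BUCKET" --recursive \
#     | expired_keys "$(date -d '-30 days' +%s)" \
#     | while read -r key; do aws s3 rm "s3://$S3_BUCKET/$key"; done
```

Separating the selection from the deletion makes it possible to review the list of keys before anything is removed.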

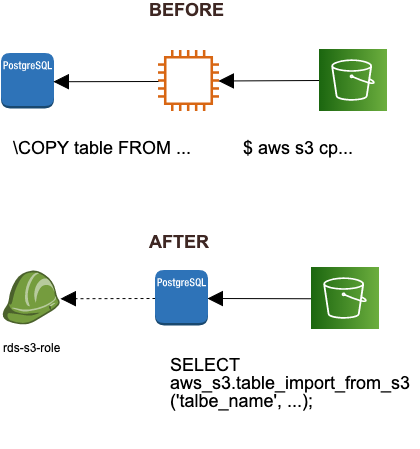


Since database dumps are typically plain text, backups should be encrypted while they are stored in the backup destination to prevent sensitive data from leaking. GnuPG (GPG) is a commonly used implementation of the OpenPGP standard, and it can encrypt database backups.

It tends to be challenging and costly to recover data when things go wrong, so backups must be kept off-site in a backup destination with reliable storage.

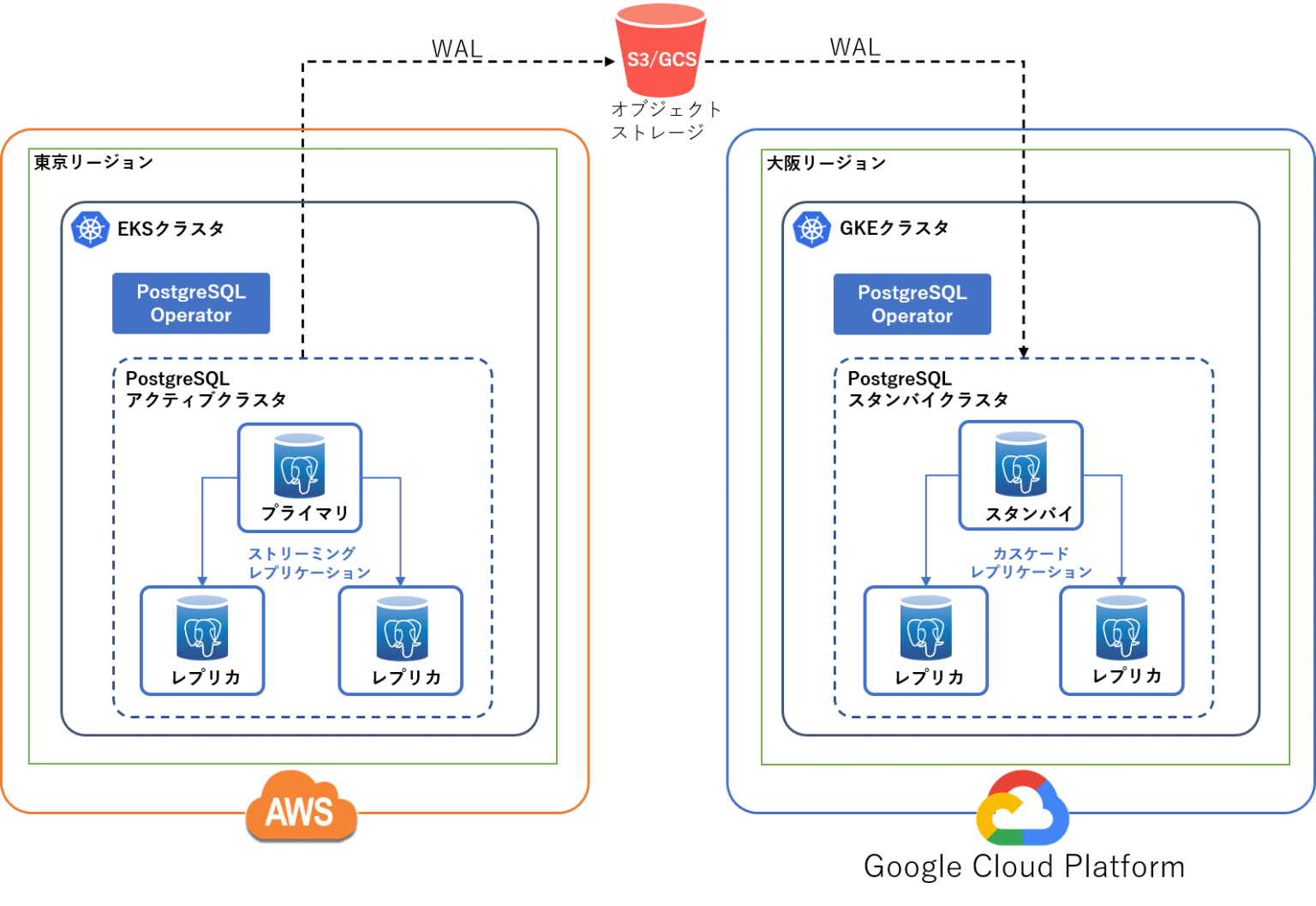

The argument for spending time setting up database backups is similar to that for paying for insurance. It seems like a waste at the outset and is easy to put off, but when things go wrong, you will wish you had it.

This article describes a way to set up scheduled, rotating, encrypted backups of a PostgreSQL database to AWS S3 in two typical deployment environments:

- a virtual machine, scheduled using Linux cron
- a Kubernetes cluster, scheduled using CronJob